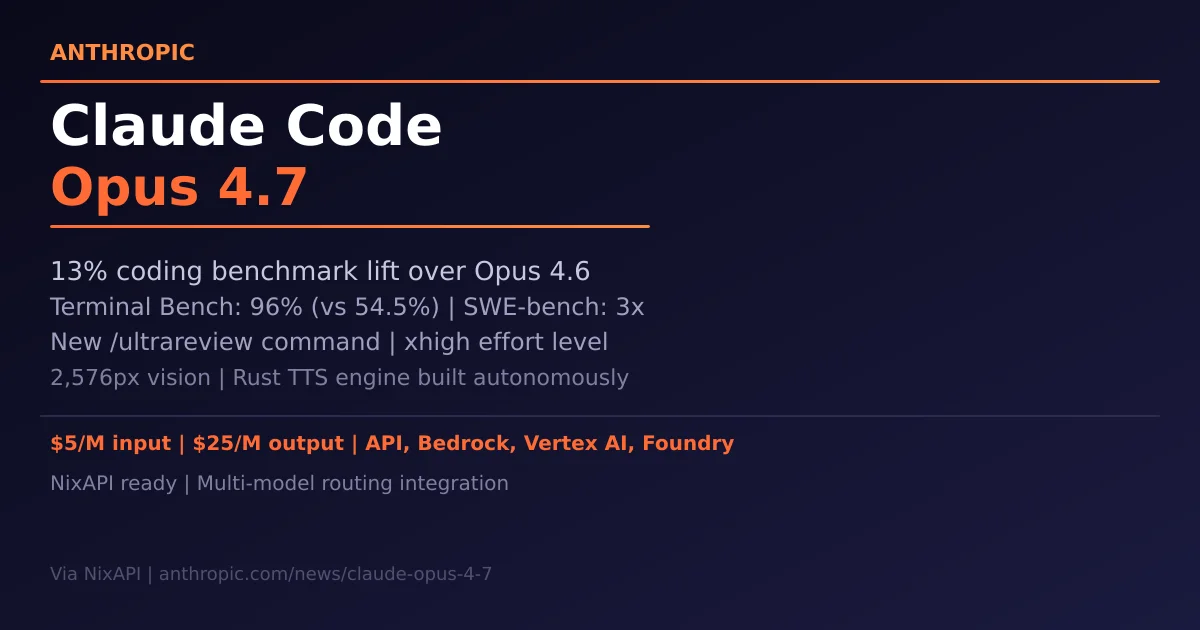

Claude Opus 4.7 Release: 13% Coding Lift, 3× Rakuten-SWE-Bench Gains, and the /ultrareview Command

Anthropic has released Claude Opus 4.7. On a 93-task coding benchmark it scores 13% higher than Opus 4.6 and solves 4 tasks that neither Opus 4.6 nor Sonnet 4.6 could. It resolves 3× as many Rakuten-SWE-Bench tasks, reaches 96% on Terminal Bench 2.0 (vs 54.5% for Opus 4.6), and arrives alongside a new xhigh effort level and the /ultrareview slash command for code review in Claude Code. This article covers the technical upgrades, pricing, and NixAPI integration paths.

Note: All facts are taken from Anthropic’s official release page (anthropic.com/news/claude-opus-4-7); nothing here relies on undisclosed information. Integration guidance is based on the public API docs.

1. Core upgrades: The numbers

Claude Opus 4.7 improves on Opus 4.6 across the benchmarks Anthropic reported:

| Benchmark | Opus 4.6 | Opus 4.7 | Change |
|---|---|---|---|
| Internal 93-task coding eval | — | — | +13% |
| Rakuten-SWE-Bench (tasks resolved) | 1× (baseline) | 3× | 3× |
| Terminal Bench 2.0 | 54.5% | 96% | +41.5 pts (+76% relative) |
| Code Quality + Test Quality | — | — | double-digit improvement |
| CursorBench | 58% | 70% | +12 pts (+21% relative) |
| Databricks OfficeQA Pro | — | — | 21% fewer errors |
| XBOW visual acuity | 54.5% | 98.5% | +44 pts (+81% relative) |
| GDPval-AA (finance/legal/econ) | — | SOTA | — |

The headline demo: Opus 4.7 autonomously built a complete Rust text-to-speech engine from scratch (neural model, SIMD kernels, and a browser demo), then fed its own output through a speech recognizer to verify that it matched the Python reference. That is months of senior engineering work, delivered without human intervention, and the codebase is public.

2. Architecture: Vision and tokenizer

3× higher image resolution

Opus 4.7 accepts images up to 2,576 pixels on the long edge (~3.75 megapixels), more than three times the pixel count supported by prior Claude models. This enables:

- Computer-use agents reading dense screenshots and UI

- Data extraction from dense technical diagrams where fine detail matters

- Life sciences patent workflows analyzing chemical structures and technical drawings

This is a model-level change: no API parameters need to change, and existing image pipelines benefit automatically, provided they are not already downscaling images client-side to the older limits.
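Pipelines that resize images before upload may want to raise their limits to match. The sketch below is a minimal pre-check under stated assumptions: it treats both figures quoted above (2,576 px long edge and ~3.75 MP) as hard caps, even though the article gives the megapixel figure only as approximate and does not describe how the API itself handles larger images. `fitForOpus47` is a hypothetical helper name.

```typescript
// Limits quoted in the article; treating the ~3.75 MP figure as a hard cap is an assumption.
const MAX_LONG_EDGE_PX = 2576;
const MAX_PIXELS = 3_750_000;

interface Size {
  width: number;
  height: number;
}

// Scale an image down (never up) so it fits both limits while preserving aspect ratio.
// Returns an unchanged copy when the image already fits.
export function fitForOpus47(size: Size): Size {
  if (size.width <= 0 || size.height <= 0) {
    throw new Error('image dimensions must be positive');
  }
  const longEdge = Math.max(size.width, size.height);
  const pixels = size.width * size.height;
  // The tighter of the two constraints wins; 1 means "no downscale needed".
  const scale = Math.min(1, MAX_LONG_EDGE_PX / longEdge, Math.sqrt(MAX_PIXELS / pixels));
  if (scale === 1) {
    return { width: size.width, height: size.height };
  }
  // Flooring keeps the result at or below both caps.
  return {
    width: Math.max(1, Math.floor(size.width * scale)),
    height: Math.max(1, Math.floor(size.height * scale)),
  };
}
```

For a wide 5152×1000 screenshot the long-edge cap binds and the result is 2576×500; for a 4:3 photo the pixel cap binds first.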

Updated tokenizer: 1.0–1.35× token inflation

The new tokenizer maps the same input to roughly 1.0–1.35× as many tokens as before, depending on content type. At higher effort levels, Opus 4.7 also thinks more, which improves reliability on hard problems at the cost of more output tokens. Anthropic provides an official migration guide; measure on real traffic before committing to the switch.
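One way to measure on real traffic is to replay a sample of production prompts against both models, record the input token count each one reports (for example from the `usage` field of each response), and summarize the ratio. This sketch assumes you already have those paired counts; `TokenSample` and `measureInflation` are hypothetical names, and the default band is simply the 1.0–1.35× range quoted above.

```typescript
// One prompt measured under both tokenizers.
interface TokenSample {
  oldTokens: number; // count reported for Opus 4.6
  newTokens: number; // count reported for Opus 4.7
}

interface InflationReport {
  weighted: number; // total new tokens / total old tokens (what your bill tracks)
  min: number; // smallest per-prompt ratio
  max: number; // largest per-prompt ratio
  outsideBand: number; // prompts whose ratio falls outside the expected band
}

export function measureInflation(
  samples: TokenSample[],
  band: [number, number] = [1.0, 1.35],
): InflationReport {
  if (samples.length === 0) throw new Error('need at least one sample');
  let oldTotal = 0;
  let newTotal = 0;
  let min = Infinity;
  let max = -Infinity;
  let outsideBand = 0;
  for (const { oldTokens, newTokens } of samples) {
    if (oldTokens <= 0) throw new Error('oldTokens must be positive');
    const ratio = newTokens / oldTokens;
    oldTotal += oldTokens;
    newTotal += newTokens;
    min = Math.min(min, ratio);
    max = Math.max(max, ratio);
    if (ratio < band[0] || ratio > band[1]) outsideBand++;
  }
  return { weighted: newTotal / oldTotal, min, max, outsideBand };
}
```

The weighted ratio is the number to plug into cost projections; a non-zero `outsideBand` flags content types worth inspecting before migrating.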

3. Security: First Project Glasswing model

Opus 4.7 is the first model released under Project Glasswing, with built-in safeguards that automatically detect and block requests indicating high-risk cybersecurity uses. This staged deployment builds real-world experience ahead of the eventual broad release of Mythos-class models. Security professionals with legitimate use cases (vulnerability research, penetration testing, red-teaming) can apply to the Cyber Verification Program.

4. New features: xhigh effort and /ultrareview

xhigh: New effort level between high and max

Opus 4.7 introduces xhigh (extra high) effort — a new tier between high and max — giving developers finer control over the reasoning-quality-vs-latency tradeoff. Claude Code has raised the default effort level to xhigh for all plans. For coding and agentic use cases, start with high or xhigh.
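A gateway can treat the effort tiers as an ordered ladder, starting at the recommended tier for coding work and stepping up only when an attempt fails. The tier names below come from this article (low and medium from the Hex comparison, high, xhigh, and max from this section); the escalate-on-retry policy and `effortForAttempt` are hypothetical, and the sketch deliberately does not show how effort is passed in an API request.

```typescript
// Effort tiers ordered from fastest/cheapest to most thorough; xhigh sits between high and max.
const EFFORT_LADDER = ['low', 'medium', 'high', 'xhigh', 'max'] as const;
type Effort = (typeof EFFORT_LADDER)[number];

// Attempt 0 uses `start`; each retry climbs one tier, capped at 'max'.
export function effortForAttempt(attempt: number, start: Effort = 'high'): Effort {
  const startIndex = EFFORT_LADDER.indexOf(start);
  const index = Math.min(
    startIndex + Math.max(0, Math.floor(attempt)),
    EFFORT_LADDER.length - 1,
  );
  return EFFORT_LADDER[index];
}
```

Starting at high keeps latency down on tasks that succeed first time, while a hard failure reaches xhigh and then max without manual tuning.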

/ultrareview: Deep code review command

Claude Code introduces /ultrareview, a dedicated review session that reads through code changes and flags bugs and design issues that a careful human reviewer would catch. All Pro and Max users get three free ultrareviews to try it out.

Auto Mode extended to Max users

Auto mode (where Claude makes decisions on your behalf) now extends to Max users, enabling longer tasks with fewer interruptions.

5. Pricing and availability

Opus 4.7 is priced identically to Opus 4.6:

| Direction | Price |
|---|---|
| Input tokens | $5 / million |
| Output tokens | $25 / million |

Available on: Claude API (platform.claude.com), Amazon Bedrock, Google Vertex AI, Microsoft Foundry.
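List prices are unchanged, but the tokenizer is not, so the same workload can cost more. A back-of-envelope sketch, assuming the same inflation factor applies to input and output text and ignoring the extra thinking tokens that higher effort levels add; `estimateOpus47CostUSD` is a hypothetical helper and the token counts are ones measured under the old tokenizer.

```typescript
// Opus 4.7 list prices from the pricing table, in USD per million tokens.
const INPUT_USD_PER_MTOK = 5;
const OUTPUT_USD_PER_MTOK = 25;

// Estimate cost for token counts measured on the old tokenizer, scaled by an
// assumed inflation factor (1.0 to 1.35 per the tokenizer section).
export function estimateOpus47CostUSD(
  inputTokens: number,
  outputTokens: number,
  inflation = 1.0,
): number {
  const inTok = inputTokens * inflation;
  const outTok = outputTokens * inflation;
  return (inTok * INPUT_USD_PER_MTOK + outTok * OUTPUT_USD_PER_MTOK) / 1_000_000;
}
```

For example, 1M input and 200k output tokens cost $10 at 1.0× and about $13.50 at 1.35×, so the tokenizer alone can add up to 35% to a bill at identical list prices.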

6. NixAPI integration

Opus 4.7 is the natural default for complex, long-running coding tasks in a multi-model gateway:

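```typescript
// providers/anthropic-opus47.ts
// `createOpenAICompatibleClient`, `sonnet46`, and `minimax27` are the gateway's own
// helpers (not part of any Anthropic SDK); the import paths below are illustrative.
import { createOpenAICompatibleClient } from './client';
import { sonnet46 } from './anthropic-sonnet46';
import { minimax27 } from './minimax-m27';

// Minimal task shape assumed for this example.
interface ChatMessage {
  role: 'user' | 'assistant';
  content: string;
}

export interface CodingTask {
  type: 'swe' | 'other';
  difficulty: 'simple' | 'medium' | 'hard';
  messages: ChatMessage[];
}

export const opus47 = createOpenAICompatibleClient({
  baseURL: 'https://api.anthropic.com/v1',
  apiKey: process.env.ANTHROPIC_API_KEY,
  defaultModel: 'claude-opus-4-7',
  defaultHeaders: {
    'anthropic-version': '2023-06-01',
  },
});

// Routing logic: the `effort` option is assumed to be forwarded by the gateway client.
export async function routeCodingTask(task: CodingTask) {
  // Hard SWE tasks → Opus 4.7 at xhigh effort
  if (task.difficulty === 'hard' && task.type === 'swe') {
    return opus47.chat(task.messages, { effort: 'xhigh' });
  }
  // Medium (and hard non-SWE) tasks → Sonnet 4.6, so hard work never lands on the cheapest tier
  if (task.difficulty !== 'simple') {
    return sonnet46.chat(task.messages, { effort: 'high' });
  }
  // Simple → MiniMax M2.7
  return minimax27.chat(task.messages);
}
```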
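A gateway that sends hard tasks to Opus 4.7 usually also wants a way to degrade gracefully when that call fails, for example by retrying on Sonnet 4.6. A minimal sketch of an ordered fallback chain; `callWithFallback` is a hypothetical helper that works with any promise-returning provider call.

```typescript
// Try each provider call in order and return the first success.
// Failures are collected so the final error explains every miss.
export async function callWithFallback<T>(
  attempts: ReadonlyArray<() => Promise<T>>,
): Promise<T> {
  const errors: unknown[] = [];
  for (const attempt of attempts) {
    try {
      return await attempt();
    } catch (err) {
      errors.push(err); // remember why this provider failed, then try the next one
    }
  }
  const reasons = errors.map((e) => (e instanceof Error ? e.message : String(e)));
  throw new Error(`all ${attempts.length} providers failed: ${reasons.join('; ')}`);
}
```

In the hard-SWE branch of the router, passing `[() => opus47.chat(...), () => sonnet46.chat(...)]` would turn an Opus outage into a Sonnet 4.6 answer instead of a failed task. Passing thunks rather than promises matters: each call only starts if the previous one failed.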

7. Key takeaway

Opus 4.7’s defining characteristic is reliability: fewer give-ups, fewer tool errors, and less unnecessary wrapper scaffolding. Hex summed it up: “low-effort Opus 4.7 ≈ medium-effort Opus 4.6.” For NixAPI-style gateways, Opus 4.7 is the natural default for hard, long-horizon coding tasks, with Sonnet 4.6 as the cost-efficient mid-tier fallback.

Try NixAPI Now

Reliable LLM API relay for OpenAI, Claude, Gemini, DeepSeek, Qwen, and Grok with ¥1 = $1 top-up
