DeepSeek V4 Review: Can the Open-Source Model Replace GPT-5? Cost Comparison and API Integration

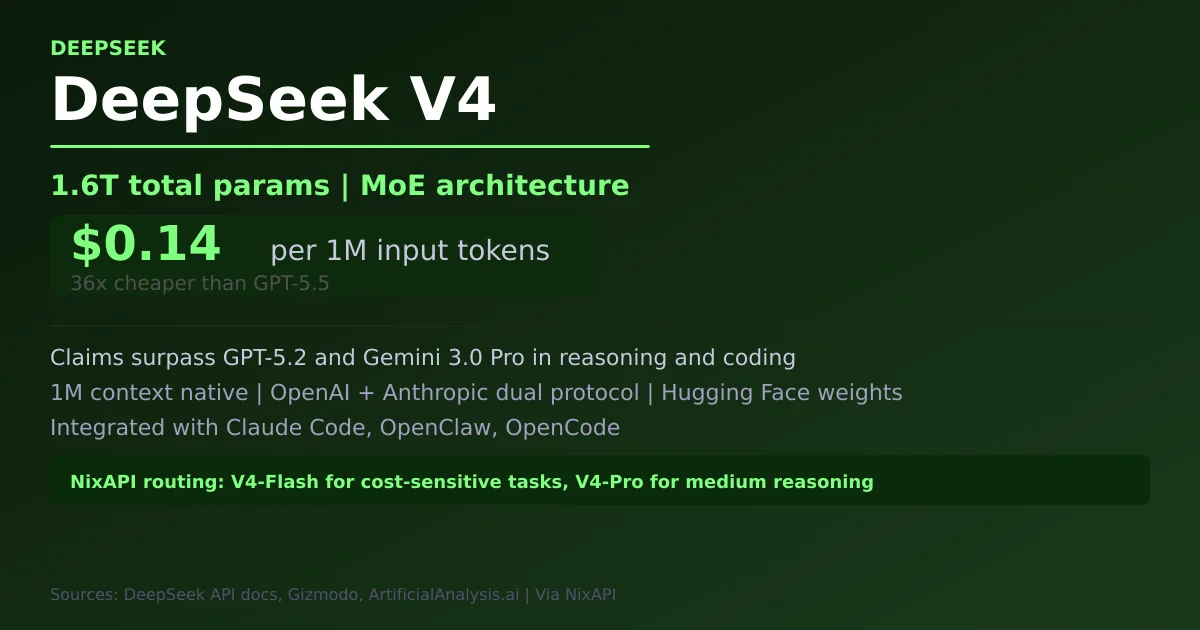

DeepSeek released the V4 preview on April 24, 2026, with V4-Pro-Max at 1.6T total parameters (49B active) and V4-Flash priced at only $0.14 per 1M input tokens. DeepSeek claims the model surpasses GPT-5.2 and Gemini 3.0 Pro in reasoning and coding, and the release is fully open-source with downloadable weights. This article walks through V4-Flash API integration, reviews the reported benchmark performance, and compares costs against GPT-5.5, Claude Opus 4.7, and Gemini 3.1 Pro.

Note: Data comes from the DeepSeek official API docs (api-docs.deepseek.com), Gizmodo, ArtificialAnalysis.ai, and Reddit r/LocalLLaMA. Integration guidance is based on the public API docs.

1. Launch: The Strongest Open-Source MoE Model

DeepSeek released V4 preview on April 24, 2026 with two models:

| Model | Total Params | Active Params | Architecture |
|---|---|---|---|
| DeepSeek-V4-Pro | 1.6 Trillion | 49 Billion | MoE |
| DeepSeek-V4-Flash | 284 Billion | 13 Billion | MoE |
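To make the MoE figures concrete, the short sketch below computes what share of each model's parameters is active for a given token. The parameter counts come from the table; the `MoeModel` type and `activeSharePercent` helper are illustrative names, not part of any DeepSeek tooling.

```typescript
// Parameter counts as listed in the table above, in billions.
interface MoeModel {
  name: string;
  totalParamsB: number;
  activeParamsB: number;
}

const models: MoeModel[] = [
  { name: "DeepSeek-V4-Pro", totalParamsB: 1600, activeParamsB: 49 },
  { name: "DeepSeek-V4-Flash", totalParamsB: 284, activeParamsB: 13 },
];

// Share of the weights that take part in processing one token, as a percentage.
function activeSharePercent(m: MoeModel): number {
  return (m.activeParamsB / m.totalParamsB) * 100;
}

for (const m of models) {
  console.log(`${m.name}: ${activeSharePercent(m).toFixed(1)}% of parameters active`);
}
```

By this arithmetic, V4-Pro activates roughly 3% of its weights per token and V4-Flash roughly 4.6%, which is why per-token compute tracks the active count rather than the total.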

DeepSeek’s official announcement: “DeepSeek-V4 is seamlessly integrated with leading AI agents like Claude Code, OpenClaw and OpenCode.” V4-Flash API is live; V4-Pro weights are fully open on Hugging Face.

2. API Pricing: $0.14/M Input, a 36× Price Advantage

Official DeepSeek V4 Flash pricing (confirmed by Gizmodo):

| Model | Input tokens | Output tokens | Input price comparison |
|---|---|---|---|
| DeepSeek-V4-Flash | $0.14 / 1M | $0.28 / 1M | 36× cheaper than GPT-5.5 |
| DeepSeek-V4-Pro | ~$0.50-1 / 1M | ~$1-2 / 1M | ~5-10× cheaper than GPT-5.5 |
| GPT-5.5 | $5 / 1M | $30 / 1M | baseline |
| GPT-5.5 Pro | $30 / 1M | $180 / 1M | 214× more expensive than V4-Flash |
| Claude Opus 4.7 | $5 / 1M | $25 / 1M | 36× more expensive than V4-Flash |

V4-Flash’s input price is roughly 36× lower than GPT-5.5’s ($0.14 vs $5 per 1M tokens), and its output price is roughly 107× lower ($0.28 vs $30 per 1M tokens), which makes it a strong fit for cost-sensitive workloads.
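Because input and output tokens are priced differently, the effective saving depends on the traffic mix. The following is a minimal sketch of that calculation using the prices from the table; the model keys are labels for this example rather than confirmed API identifiers, and the workload of 100M input and 20M output tokens is a made-up figure.

```typescript
// Prices in USD per 1M tokens, taken from the pricing table above.
interface Pricing {
  inputPerM: number;
  outputPerM: number;
}

const PRICES: Record<string, Pricing> = {
  "deepseek-v4-flash": { inputPerM: 0.14, outputPerM: 0.28 },
  "gpt-5.5": { inputPerM: 5, outputPerM: 30 },
  "claude-opus-4.7": { inputPerM: 5, outputPerM: 25 },
};

// Total cost in USD for a given number of input and output tokens.
function costUsd(model: string, inputTokens: number, outputTokens: number): number {
  const p = PRICES[model];
  if (!p) throw new Error(`No pricing for model: ${model}`);
  return (inputTokens / 1e6) * p.inputPerM + (outputTokens / 1e6) * p.outputPerM;
}

// Hypothetical monthly workload: 100M input tokens, 20M output tokens.
const flashCost = costUsd("deepseek-v4-flash", 100e6, 20e6);
const gptCost = costUsd("gpt-5.5", 100e6, 20e6);

console.log(`V4-Flash: $${flashCost.toFixed(2)}`);
console.log(`GPT-5.5:  $${gptCost.toFixed(2)}`);
console.log(`Ratio:    ${(gptCost / flashCost).toFixed(1)}x`);
```

For this mix the totals come to about $19.60 versus $1,100, a ratio of roughly 56×. The ratio always falls between the input-only figure (about 36×) and the output-only figure (about 107×), so output-heavy workloads see the larger saving.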

3. Benchmarks: Can Open-Source Match Top Closed-Source?

DeepSeek official benchmark data:

| Benchmark | DeepSeek-V4-Pro | GPT-5.2 | Gemini 3.0 Pro | Note |
|---|---|---|---|---|
| Reasoning (Math/STEM/Coding) | SOTA open | close | close | Beats all open models |
| Agentic Coding | Open SOTA | n/a | n/a | Best among all open models |
| World Knowledge | Only behind Gemini 3.1 Pro | n/a | n/a | Strongest open model |
| Context Efficiency | World-leading | n/a | n/a | Token compression + DSA |

Key technical highlights from DeepSeek’s tech report:

“Novel Attention: Token-wise compression + DSA (DeepSeek Sparse Attention) — world-leading long context efficiency with drastically reduced compute and memory costs.”

1M context is now the default across all DeepSeek official services.

4. V4 Flash API Integration (via ArtificialAnalysis.ai)

DeepSeek V4 Flash provider comparison (ArtificialAnalysis.ai):

| Provider | Input price | Output price | Time to first token |
|---|---|---|---|
| DeepSeek official | $0.14/M | $0.28/M | 0.95s |
| APIYI and others | ~$0.14/M | ~$0.28/M | slightly higher |

DeepSeek official API endpoint: api.deepseek.com. It supports both the OpenAI ChatCompletions and Anthropic API protocols.
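Since the endpoint speaks the OpenAI ChatCompletions protocol, a request can be sketched with plain `fetch` and no SDK. The example below is an illustration under assumptions: the `/v1/chat/completions` path follows the OpenAI convention and should be checked against the DeepSeek docs, the `max_tokens` value is arbitrary, and `buildChatRequest` and `chat` are hypothetical helper names.

```typescript
interface ChatMessage {
  role: "system" | "user" | "assistant";
  content: string;
}

interface ChatRequest {
  url: string;
  init: { method: string; headers: Record<string, string>; body: string };
}

// Builds the HTTP request without sending it, so it can be inspected or tested offline.
function buildChatRequest(
  apiKey: string,
  messages: ChatMessage[],
  model = "deepseek-v4-flash",
): ChatRequest {
  return {
    // Assumed path, following the OpenAI ChatCompletions convention.
    url: "https://api.deepseek.com/v1/chat/completions",
    init: {
      method: "POST",
      headers: {
        "Content-Type": "application/json",
        Authorization: `Bearer ${apiKey}`,
      },
      body: JSON.stringify({ model, messages, max_tokens: 256 }),
    },
  };
}

// Sends the request. Requires network access and a valid API key.
async function chat(apiKey: string, messages: ChatMessage[]): Promise<string> {
  const { url, init } = buildChatRequest(apiKey, messages);
  const res = await fetch(url, init);
  if (!res.ok) throw new Error(`DeepSeek API returned HTTP ${res.status}`);
  const data = (await res.json()) as { choices: { message: { content: string } }[] };
  return data.choices[0]?.message.content ?? "";
}
```

Keeping request construction separate from the network call makes it easy to swap the base URL for another OpenAI-compatible provider from the comparison table.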

5. V4 Flash vs V4 Pro: Decision Framework

| Use case | Recommended | Why |
|---|---|---|
| Simple agent tasks | V4-Flash | Matches Pro performance on simple tasks, faster and cheaper |
| Complex reasoning / coding | V4-Pro | 49B active params, stronger reasoning |
| Long context (>100K tokens) | V4-Pro / Flash | Native 1M context |
| High-stakes critical tasks | GPT-5.5 or Opus 4.7 | Closed-source models are the safer default where reliability is critical |
| Chinese market / Chinese language | V4-Pro / Flash | Strong Chinese understanding, supports local deployment |
| Extreme budget sensitivity | V4-Flash | $0.14/M input, among the cheapest in the industry |

6. NixAPI Integration

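    // providers/deepseek-v4.ts
    import OpenAI from 'openai';

    // Minimal task shape used by the router.
    interface Task {
      messages: OpenAI.Chat.ChatCompletionMessageParam[];
      difficulty: 'simple' | 'medium' | 'hard';
      costSensitive: boolean;
    }

    // Premium-tier client; assumed to be defined elsewhere in the codebase.
    declare const opus47: {
      chat(messages: Task['messages'], options: { effort: 'high' }): Promise<unknown>;
    };

    // DeepSeek exposes an OpenAI-compatible endpoint, so the OpenAI SDK can be reused.
    const deepseek = new OpenAI({
      apiKey: process.env.DEEPSEEK_API_KEY,
      baseURL: 'https://api.deepseek.com/v1',
    });

    // NixAPI routing: DeepSeek as cost-priority layer
    export async function routeTask(task: Task) {
      // Cost-sensitive + simple tasks -> V4 Flash
      if (task.costSensitive && task.difficulty === 'simple') {
        return deepseek.chat.completions.create({
          model: 'deepseek-v4-flash',
          messages: task.messages,
          max_tokens: 512,
        });
      }

      // Medium complexity reasoning -> V4 Pro (unless cost is no object)
      if (task.difficulty === 'medium' && task.costSensitive) {
        return deepseek.chat.completions.create({
          model: 'deepseek-v4-pro',
          messages: task.messages,
          max_tokens: 1024,
        });
      }

      // High-difficulty tasks -> Opus 4.7 or GPT-5.5
      return opus47.chat(task.messages, { effort: 'high' });
    }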
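The routing decision itself can be separated from the network calls, which makes it straightforward to unit-test. The sketch below is one way to do that, assuming a single `costSensitive` flag on the task; `RoutableTask`, `Target`, and `pickTarget` are hypothetical names introduced for this example.

```typescript
type Difficulty = "simple" | "medium" | "hard";

interface RoutableTask {
  difficulty: Difficulty;
  costSensitive: boolean;
}

// "premium" stands for the Opus 4.7 / GPT-5.5 tier.
type Target = "deepseek-v4-flash" | "deepseek-v4-pro" | "premium";

// Pure decision function: no I/O, so it can be tested without API keys.
function pickTarget(task: RoutableTask): Target {
  if (task.costSensitive && task.difficulty === "simple") return "deepseek-v4-flash";
  if (task.costSensitive && task.difficulty === "medium") return "deepseek-v4-pro";
  return "premium";
}

console.log(pickTarget({ difficulty: "simple", costSensitive: true }));
console.log(pickTarget({ difficulty: "medium", costSensitive: true }));
console.log(pickTarget({ difficulty: "hard", costSensitive: true }));
```

One consequence of this rule set is that a simple task that is not flagged as cost-sensitive falls through to the premium tier, which may or may not be the intended behavior.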

7. Impact on NixAPI Routing Architecture

DeepSeek V4’s pricing ($0.14/M input) directly impacts NixAPI’s multi-model routing tier design:

| Tier | Model | Input cost | Use case |
|---|---|---|---|
| Free / minimum cost | V4-Flash | $0.14/M | Simple tasks, Chinese language, cost-sensitive |
| Mid-tier | V4-Pro / Sonnet 4.6 | $0.50-3/M | Medium reasoning, simple agent workflows |
| High-tier | Opus 4.7 / GPT-5.5 | $5/M+ | Complex coding, scientific research, high reliability |
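To see what tiering does to the average bill, the sketch below computes a blended input cost per 1M tokens across the three tiers. The per-tier prices come from the table (using the upper bound of the mid-tier range); the 70/20/10 traffic split is a hypothetical assumption, not a measured NixAPI figure.

```typescript
interface Tier {
  name: string;
  inputPerM: number; // USD per 1M input tokens
  trafficShare: number; // fraction of input tokens routed to this tier
}

// Weighted average input price across tiers; shares must sum to 1.
function blendedInputCostPerM(tiers: Tier[]): number {
  const totalShare = tiers.reduce((sum, t) => sum + t.trafficShare, 0);
  if (Math.abs(totalShare - 1) > 1e-9) throw new Error("Traffic shares must sum to 1");
  return tiers.reduce((sum, t) => sum + t.inputPerM * t.trafficShare, 0);
}

// Hypothetical traffic mix.
const mix: Tier[] = [
  { name: "minimum cost (V4-Flash)", inputPerM: 0.14, trafficShare: 0.7 },
  { name: "mid-tier (upper bound)", inputPerM: 3, trafficShare: 0.2 },
  { name: "high-tier (Opus 4.7 / GPT-5.5)", inputPerM: 5, trafficShare: 0.1 },
];

console.log(`Blended input cost: $${blendedInputCostPerM(mix).toFixed(2)} per 1M tokens`);
```

Under these assumptions the blended input price comes to about $1.20 per 1M tokens, compared with $5 if every request went to the high tier.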

DeepSeek V4 means NixAPI can offer near GPT-5-class capability at a fraction of the cost for budget-sensitive users. For the Chinese market specifically, DeepSeek’s Chinese language understanding and locally deployable open weights (via Hugging Face) make it an exceptionally strong option, and no API key is required when running locally.

8. Key Takeaway

DeepSeek V4 delivers near top-tier reasoning and coding capability, with V4-Flash input tokens priced about 36× below GPT-5.5’s, native 1M context support, and fully open weights. For NixAPI, V4-Flash is the natural choice for the “cost-priority tier,” with V4-Pro handling medium-complexity reasoning tasks. Together they form a layered routing architecture in which DeepSeek handles the baseline and top closed-source models handle the hard problems.
